When I started cross-checking humanizer outputs against detectors, I quickly realized one tool wasn’t enough. The competition is good — I use it constantly, and I’ve cross-checked hundreds of content samples with it. But it doesn’t catch copied content. It doesn’t scan whole websites. And it definitely doesn’t flag the guy who’s been copy-pasting from competitor blogs.

That’s where Originality.ai fills a real gap. It’s a single tool that handles both AI detection and copy scanning. For content agencies and publishers drowning in AI-written submissions, that combination matters.

I’ve been using Originality.ai alongside other detectors to verify humanizer outputs from AI writing tools like Undetectable AI, HumanizerPro, and others. Here’s my honest Originality.ai review — what it does well, where it frustrates me, and who should actually pay for it.

What Is Originality AI and Who Actually Needs It in 2026?

Originality.ai is a SaaS content quality platform built for content teams (primarily agencies, publishers, and search marketing shops) that need to verify whether content is machine-generated or human-written, and whether it’s plagiarized. It’s not really built for individual bloggers or students, though they use it. The sweet spot is a team that processes a lot of content and needs a single platform to run quality checks at scale.

The AI checker packs more into one dashboard than most people realize when they first sign up. Detection of machine-generated text is the headline feature, but it comes bundled with a copy scanner, readability scorer, fact-checking flags, grammar/spelling checks, a Chrome extension for in-editor use, and a site-wide URL scanner. That last one is genuinely useful if you’re auditing a website before buying it on Flippa. I haven’t seen any competitor push that use case, but it’s real.

Detection Features and Suite Beyond AI Detection

Here’s what Originality.ai actually includes:

- AI Content Detection — classifies content as AI-generated or human-written, and works across ChatGPT, Claude, Gemini, LLaMA, and other popular models and AI tools

- Plagiarism Scanner — scans for copied or paraphrased content with claimed ~99.5% accuracy on complex patterns

- Readability Scoring — grades clarity and structure

- Fact Checker — flags potential hallucinations and factual errors (useful, not foolproof)

- Website Scanner — bulk-scan entire domains by URL, not just individual documents

- Team Accounts — role-based access, shared reports, tagging for collaborative workflows

- API Access — for developers building automated content pipelines

- Chrome Extension + WordPress Plugin — check content inside Google Docs or the WP editor

That’s a lot packed in. Whether you need all of it depends entirely on your workflow.

Who This Tool Is Actually Built For

Honestly, it’s built for content agencies and publishers who process articles at volume. If you’re managing a team of writers and need to verify every piece of content, check for duplicate content, and run detection before it goes live, the bundle of detection plus copy checking in one dashboard is a genuine time-saver. You’re not switching between Copyscape and a separate AI detector for every piece.

Search marketing and content teams get value here too — especially with the site-wide scanner for content audits. And if you’ve ever been thinking about buying a content site, scanning the entire domain for machine-generated content before you wire money is… probably a good idea in 2026.

Individual creators? Less compelling. The credit-based pricing model gets expensive fast at low volume, and you lose the economies of scale that make it worthwhile for agencies.

How Its Detection Technology Actually Works

Originality.ai uses a BERT-based detection model: a transformer architecture trained to distinguish AI-written text from human-written text. When AI models generate text, they produce word sequences that follow predictable probability distributions. BERT-based detectors look for those signatures.
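To make "predictable probability distributions" a little more concrete, here is a toy sketch in Python. It is not how Originality.ai works (the review describes a BERT-based classifier, and its internals are not public). It only scores how predictable a word sequence is under a tiny add-one-smoothed bigram model, where lower perplexity means more predictable text. The function name and the sample sentences are made up for illustration.

```python
import math
from collections import Counter


def bigram_perplexity(train_tokens, test_tokens):
    """Perplexity of test_tokens under an add-one-smoothed bigram model.

    Toy illustration only: real detectors use far larger models and
    learned classifiers, not a hand-counted bigram table.
    """
    if len(test_tokens) < 2:
        raise ValueError("need at least two test tokens")
    vocab = set(train_tokens) | set(test_tokens)
    # Count how often each word appears as the first half of a bigram.
    context_counts = Counter(train_tokens[:-1])
    bigram_counts = Counter(zip(train_tokens, train_tokens[1:]))

    pairs = list(zip(test_tokens, test_tokens[1:]))
    log_prob = 0.0
    for prev, word in pairs:
        # Add-one (Laplace) smoothing so unseen bigrams get a small probability.
        p = (bigram_counts[(prev, word)] + 1) / (context_counts[prev] + len(vocab))
        log_prob += math.log(p)
    return math.exp(-log_prob / len(pairs))


train = "the cat sat on the mat the cat sat on the rug".split()
predictable = "the cat sat on the mat".split()
surprising = "mat the on sat cat the".split()

# The familiar word order is more predictable, so its perplexity is lower.
print(bigram_perplexity(train, predictable) < bigram_perplexity(train, surprising))
```

The point of the sketch is only the direction of the signal: text that closely follows the patterns a model expects scores as more predictable, which is also why tightly edited human prose can end up looking machine-like.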

The problem (which this tool shares with every other detector) is that polished human writing, such as formal academic prose or highly edited editorial content, can look statistically similar to AI output. That’s not a flaw in the detector design; it’s an inherent limitation of the approach. Structured writing patterns resemble machine-generated patterns.

Lite vs. Turbo vs. Multilingual — Which Detection Model Should You Use?

Originality.ai’s detection platform offers three detection models, which most reviews completely ignore — and it’s where Originality looks noticeably different compared to other detection tools. Here’s the practical breakdown:

| Model | Best For | Speed | Credit Cost |
|---|---|---|---|
| Lite | Quick checks, high-volume scanning | Fastest | Lower |
| Turbo | Accuracy-focused checks on important content | Moderate | Higher |
| Multilingual | Non-English content | Varies | Higher |

For day-to-day content QA, Lite is fine. For high-stakes checks — editorial pieces you’re about to publish under your brand — use Turbo. If you’re running non-English content, the Multilingual model reduces (but doesn’t eliminate) the accuracy drop on foreign-language text.

Can It Be Bypassed by AI Humanizers?

Yes. Heavy editing or humanizing can lower the score AI content gets on any detector, including Originality.ai. Popular writing and rewriting tools like Undetectable AI, QuillBot’s AI paraphraser, and StealthGPT specifically advertise bypassing AI detection, and in testing they do reduce scores meaningfully.

That said, its detection models are updated as humanizer tools evolve. Detection versus humanization is an arms race. The score on humanized content today might look different six months from now as the model retrains on newer patterns.

I Tested Originality AI Against Real Content — Here’s What Actually Happened

During my initial testing session, I used the same base content I ran through all the humanizer tools in my broader detection testing series: a 300-word academic essay written by Claude 3.5 Sonnet. Same prompt (“Write a 300-word academic essay about the impact of social media on mental health”), same output, tested across multiple detectors and humanizers.

Test 1 — Raw Claude 3.5 Sonnet Output

Raw, unedited text from Claude: the tool flagged it as AI immediately, with high probability. No surprise there. Raw ChatGPT or Claude output is low-hanging fruit for any decent detector: the formatting is too clean and the sentence structure too predictable.

This is the easy test. Every serious detector on the market passes it.

Test 2 — Humanized AI Content (The Harder Question)

After running the Claude essay through several humanizers, I checked those outputs through Originality.ai. Results were mixed in ways that aligned with what I found using a second detector as a cross-reference:

Outputs run through Undetectable AI on its “Undetectable” mode scored lower (more human-like) on Originality.ai, consistent with Undetectable AI being the strongest humanizer in my testing. StealthGPT’s output, which my second detector flagged at 100% AI, also scored high on Originality.ai’s detection scan. WriteHuman’s output, despite claiming 100% human on its own internal scanner, showed elevated AI probability on Originality.ai too.

The key takeaway: no single humanizer reliably beats Originality.ai and the other major detectors at the same time. The tools that fool one detector often get flagged by another, which is exactly why running multiple detectors matters.
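A cross-check like this can be written down as a simple rule. The sketch below is one hypothetical way to combine scores from several detectors; the detector names, the 0.5 threshold, and the three verdict labels are all assumptions for illustration, not anything Originality.ai or another vendor prescribes.

```python
def cross_check(scores, threshold=0.5):
    """Combine AI-probability scores (0.0 to 1.0) from several detectors.

    Returns a (verdict, flagged_detectors) tuple:
      - "likely AI" when every detector is at or above the threshold
      - "needs review" when the detectors disagree
      - "likely human" when none of them flag the text
    """
    if not scores:
        raise ValueError("at least one detector score is required")
    flagged = sorted(name for name, score in scores.items() if score >= threshold)
    if len(flagged) == len(scores):
        verdict = "likely AI"
    elif flagged:
        verdict = "needs review"
    else:
        verdict = "likely human"
    return verdict, flagged


# Hypothetical scores for one humanized essay: one detector is fooled, one is not.
print(cross_check({"originality_ai": 0.20, "second_detector": 0.90}))
# prints ('needs review', ['second_detector'])
```

Treating disagreement as "needs review" rather than averaging the scores keeps a human in the loop for exactly the cases where the detectors contradict each other.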

Test 3 — Human-Written Articles (The False Positive Problem)

This is where it gets frustrating. I ran several clearly human-written pieces through Originality.ai (polished, edited blog posts and essays) and got elevated AI scores on some of them. Not consistently, but often enough that false flags on legitimate human prose are a real concern for any writer who works in a structured, formal style.

The rate of false positives is a documented issue. Independent tests put the tool’s overall accuracy at 76–94% depending on content type, with accuracy dropping on polished human writing. Originality.ai claims ~99% accuracy with low false positives in its internal testing, but that figure doesn’t hold up against real-world tests on diverse content types.

The sentence-level highlighting you get from other detection tools lets you see exactly which passages are being flagged. Originality.ai gives you an overall score for the document but a less granular breakdown. For writers disputing a false positive, that difference matters a lot.
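Overall accuracy and the false positive rate are different numbers, and a detector can look good on one while hurting writers on the other. The sketch below shows how both are computed from a labeled test set. The ten labels and predictions are invented purely to illustrate the arithmetic; they are not results from my testing or from any published benchmark.

```python
def detector_metrics(labels, predictions):
    """Compute accuracy and false positive rate for an AI detector.

    labels and predictions are equal-length lists of "ai" or "human".
    A false positive is human-written text that the detector calls "ai".
    """
    if len(labels) != len(predictions) or not labels:
        raise ValueError("labels and predictions must be non-empty and the same length")
    correct = sum(1 for truth, guess in zip(labels, predictions) if truth == guess)
    human_total = sum(1 for truth in labels if truth == "human")
    false_positives = sum(
        1 for truth, guess in zip(labels, predictions)
        if truth == "human" and guess == "ai"
    )
    accuracy = correct / len(labels)
    # Undefined when the test set has no human-written samples.
    fpr = false_positives / human_total if human_total else None
    return accuracy, fpr


# Made-up example: 6 AI samples (all caught) and 4 human samples (1 wrongly flagged).
labels = ["ai"] * 6 + ["human"] * 4
predictions = ["ai"] * 6 + ["ai", "human", "human", "human"]
print(detector_metrics(labels, predictions))  # prints (0.9, 0.25)
```

In this toy case the detector is 90% accurate overall, yet one in four human writers gets flagged, which is the gap between a headline accuracy claim and what a writer actually experiences.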

Does Originality AI’s Plagiarism Scanner Compete With Copyscape?

For a content agency already paying for Originality.ai, the built-in plagiarism checker is a genuine bonus: you’re running both scans in one pass, saving time and cost compared to separate tools.

The plagiarism detection engine covers copied and paraphrased content, not just exact matches. That matters because most plagiarists aren’t copy-pasting verbatim. They’re paraphrasing and hoping the scanner misses it. Originality.ai catches a good chunk of that.

Where it falls short: English-only support (multilingual copy detection is limited), no Microsoft Word integration, and a database that isn’t as deep as Copyscape’s web crawl index. For pure similarity checking at scale, especially on international content, Copyscape still has the edge. But as a bundled plagiarism scanner alongside detection? It’s good enough for most content teams.

Feature Breakdown — What You’re Actually Paying For

Website-Wide Scanner: Genuinely Useful for Site Buyers

This is an underappreciated feature. You paste a domain URL and the tool crawls the site and scans its indexed pages for AI-generated content. If you’re buying a content website and want to check whether the seller used AI to produce 400 blog posts before you pay five figures for the domain, this is worth the subscription alone.

No other popular AI detection tool has pushed this use case. It’s a real editorial workflow advantage that Originality gets credit for.

Fact and Readability Features in Practice

Editorial teams that use AI in their content workflow will find the fact checker useful: it flags claims that might be hallucinations or outdated information. It’s not perfect, and the flags require human verification. Think of it as a second pair of eyes that catches obvious errors, not a replacement for editorial judgment.

The readability scorer gives your content a grade score similar to the Flesch-Kincaid index. For content teams focused on search rankings trying to hit a specific reading level, it’s handy to have this bundled in rather than running a separate tool.
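For reference, the standard Flesch-Kincaid grade level formula is 0.39 × (words per sentence) + 11.8 × (syllables per word) − 15.59. The sketch below implements that formula in Python with a crude vowel-group syllable counter, which is my own simplification. Originality.ai’s actual scorer is not documented here and may compute its grade differently.

```python
import re


def count_syllables(word):
    """Rough syllable estimate: count groups of consecutive vowels (min 1)."""
    return max(1, len(re.findall(r"[aeiouy]+", word.lower())))


def flesch_kincaid_grade(text):
    """Approximate Flesch-Kincaid grade level of a piece of English text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (
        0.39 * (len(words) / len(sentences))
        + 11.8 * (syllables / len(words))
        - 15.59
    )


simple = "The cat sat. The dog ran."
dense = "Organizations increasingly prioritize comprehensive editorial verification."

# Short sentences with short words score a lower grade than long, polysyllabic ones.
print(flesch_kincaid_grade(simple) < flesch_kincaid_grade(dense))  # prints True
```

The syllable heuristic over-counts silent vowels and under-counts some diphthongs, so treat the output as a relative signal between drafts rather than an exact grade.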

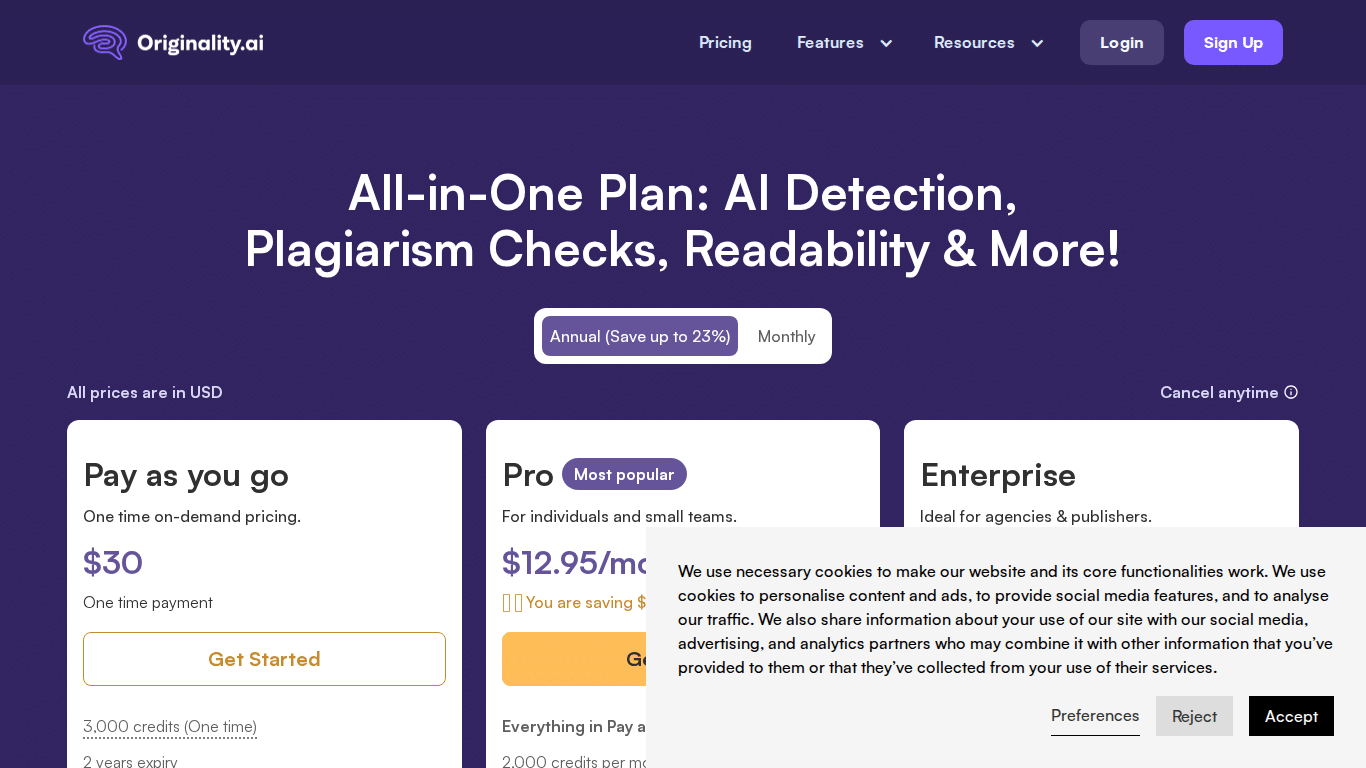

Pricing 2026 — What the Credit System Actually Costs You

The credit system is the most confusing part of Originality.ai. Other reviews don’t explain it well either, which is part of why this section exists. Here’s how it actually works:

- 1 credit ≈ 100 words when running detection-only scans

- 1 credit ≈ 50 words when running detection plus copy scanning simultaneously

- Fact checks consume more credits per word

- Credits expire monthly on subscription plans (unused credits don’t roll over)

Pay-As-You-Go vs. Monthly Subscription: Full Breakdown

| Plan | Credits | Cost | Best For |
|---|---|---|---|
| Pay-As-You-Go | 3,000 credits | ~$30 one-time | Occasional users, single projects |
| Basic/Pro Subscription | ~2,000 credits/month | ~$12.95–$14.95/mo | Regular users, small teams |
| Enterprise | 15,000+ credits/month | ~$136+/mo | Agencies, large content teams |

Real Credit Math — What Scanning 30 Articles Per Month Actually Costs

Let’s do the math nobody else does. Say you’re a content team publishing 30 articles per month at ~1,500 words each. That’s 45,000 words.

Detection-only scanning: 450 credits per month (45,000 words ÷ 100 words per credit). The subscription plan easily covers this. You’re not even using half your 2,000-credit allowance.

AI detection + plagiarism combined: 900 credits per month (since each credit covers only ~50 words in this mode). Still within the base subscription.
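If you want to rerun this math for your own volume, a small calculator is enough. The sketch below uses the approximate rates from this section (100 words per credit for detection only, 50 for detection plus plagiarism). The function and key names are mine, and rounding up once on the monthly total is an assumption; the platform may round per scan.

```python
import math

# Approximate words covered by one credit, per the rates described in this review.
WORDS_PER_CREDIT = {
    "ai_only": 100,
    "ai_plus_plagiarism": 50,
}


def credits_needed(articles, words_per_article, scan_type):
    """Estimate monthly credits for a given article volume and scan type."""
    if scan_type not in WORDS_PER_CREDIT:
        raise ValueError(f"unknown scan type: {scan_type}")
    total_words = articles * words_per_article
    # Assumption: partial credits are rounded up on the monthly total.
    return math.ceil(total_words / WORDS_PER_CREDIT[scan_type])


print(credits_needed(30, 1500, "ai_only"))             # prints 450
print(credits_needed(30, 1500, "ai_plus_plagiarism"))  # prints 900
```

Swapping in your own article count and length shows quickly whether the ~2,000-credit subscription covers you or whether combined scanning pushes you toward a higher tier.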

Where costs scale fast: if you’re running full-site scans on large domains, processing thousands of articles in bulk, or using fact-checking heavily. Enterprise-grade usage will push you into the $136+/month tier quickly.

Annual billing reduces costs further. No prominent discount code is available at this time — the annual plan itself offers the best savings currently.

Vs. The Competition: Two Tools, Two Different Jobs

I use both in my testing workflow, so I’ll just say it directly: they’re not competing for the same use case, even though they’re both detection tools.

| Feature | Originality.ai | Competitor |
|---|---|---|
| AI Detection | ✅ Strong on unedited text | ✅ Strong, sentence-level highlighting |
| Plagiarism Checking | ✅ Bundled | ❌ Not available |
| Sentence-Level Flags | Limited | ✅ Highlighted paragraphs |
| Website-Wide Scanner | ✅ | ❌ |
| Team/Agency Features | ✅ Strong | Limited |
| Academic Integrity | Good | ✅ Better for educators |
| Free Tier | Very limited | More generous |
| Best Fit | Agencies, publishers | Educators, individual creators |

If you want granular feedback on which sentences are AI-generated — say, you’re an editor reviewing a freelancer’s submission and need to explain exactly what looks suspicious — that sentence-level highlighting from the comparison tool is better. Read our full GPTZero review for a deeper look.

If you’re running bulk scans across a content operation, need copy detection bundled in, or want to audit an entire website — Originality is the better fit. It’s an AI detector tool with agency-scale infrastructure that few competitors match.

What Originality AI Gets Right (And What Needs Work)

After months of use — here’s where I actually stand on it.

The things it gets right: Detection and plagiarism in one dashboard is a real time-saver — you’re not juggling two different tools for every content submission. The site-wide URL scanner is unique — no other tool offering advanced AI detection at this price point does this. Team management features are genuinely solid for agencies with multiple editors. API access is production-ready for developers. And honestly? Support has been responsive when I’ve had questions.

Where it frustrates me: The false positive rate on polished original content is a bigger problem than the company acknowledges. Running human-generated content through and getting an AI flag is frustrating for any writer. I’ve had well-edited human articles come back with elevated scores — not consistently, but enough times that I’d never use Originality.ai as the only gate in an editorial process. You need a second check.

No sentence-level highlighting is the other thing. Other tools highlight the exact paragraphs that look AI-generated. Originality.ai gives you an overall score and… that’s mostly it. If a writer pushes back on a false flag, you can’t point to anything specific. That’s a workflow problem.

The credit math is also more confusing than it needs to be. Most people don’t realize that running both AI scanning and plagiarism at the same time doubles your credit consumption. That catches a lot of new users off guard on their first billing cycle.

Originality AI Alternatives Worth Considering in 2026

If Originality.ai doesn’t fit your workflow, here are the realistic alternatives — each with a different strength:

- GPTZero — Better for educators and individual creators. Sentence-level highlighting is more actionable. No copy scanning, but the free tier is generous and the accuracy is comparable to most synthetic content detection tools. It’s my go-to when I need to detect AI content at the sentence level.

- Winston AI — High detection rate claims, solid for cross-platform publishers. Less established track record than Originality.ai but competitive on accuracy metrics.

- Copyleaks — Better for multilingual content and enterprise-scale copy detection. Supports more languages than Originality.ai and has stronger institutional integrations.

- Turnitin — The standard for academic integrity. Deep academic paper database, LMS integrations. Not designed for editorial teams — overkill (and expensive) for agencies.

For most content agencies deciding between tools like Originality and its main rival — the honest answer is: use both for important content. They catch different things. They’re not that expensive when stacked against the cost of publishing AI content that tanks your SEO.

Who Should Actually Use Originality AI in 2026?

Buy it if you’re: A content agency processing 20+ articles/week that needs AI detection and copy scanning in one tool. A publisher auditing freelancer submissions at scale. A search-focused content team that wants readability + AI detection bundled. A site buyer doing due diligence before acquiring a content website.

Look elsewhere if you’re: An individual blogger or student (free detector tiers or one-off Copyscape checks are more cost-effective). An educator needing academic integrity enforcement (academic tools have institutional integrations this platform doesn’t). A developer wanting fine-grained sentence-level analysis (a competitor API with more granular output is a better fit).

For the best humanizer tools that specifically test against Originality.ai and other detectors, check my full comparison guide.

Review — Frequently Asked Questions

Is Originality AI accurate?

Originality.ai’s accuracy ranges from 76–94% in independent testing, depending on content type. It’s strongest on raw, unedited AI-generated content. Detection accuracy drops on polished human writing (false positives) and non-English text. The vendor claims ~99% accuracy, but that reflects best-case internal testing conditions.

Is it worth it for individual bloggers?

Probably not. The credit-based pricing and feature set are optimized for teams and agencies running bulk scans. Individual bloggers are better served by a free detector tier or one-off Copyscape checks. Originality.ai’s value scales with content volume.

Can you bypass Originality AI with humanizers?

Yes — advanced humanizers can reduce detection scores on Originality.ai. Tools like Undetectable AI (Undetectable mode) showed the lowest probability scores in testing. However, content that bypasses Originality.ai often still gets flagged by competing scanners or other detectors. No humanizer consistently beats all detectors simultaneously — and building an accurate AI detector that keeps up with humanizers is an ongoing challenge.

How does the credit system work?

1 credit covers 100 words for AI detection only, or 50 words when running AI detection and plagiarism checks simultaneously. Credits expire monthly on subscription plans. The Pay-As-You-Go plan gives 3,000 credits for ~$30. Subscription plans start at ~$12.95/month for ~2,000 credits.

Is Originality AI a scam?

No, the idea that Originality AI is a scam is false — it is a legitimate tool with a genuine user base in content agencies and publishing. The common complaint is false positives on human writing, not fraud. The company is a real SaaS startup active since the early 2020s with verified customer support and active development.

How does Originality AI compare to alternatives?

Academic tools like Turnitin serve institutions with deep integrations into university platforms and a vast academic paper database. Originality.ai is built for content marketing teams and agencies. If you need academic integrity enforcement with LMS integration, academic tools win. If you need bulk AI detection plus plagiarism checking for web content, Originality.ai is the better fit.